(T. Benson for VOA)

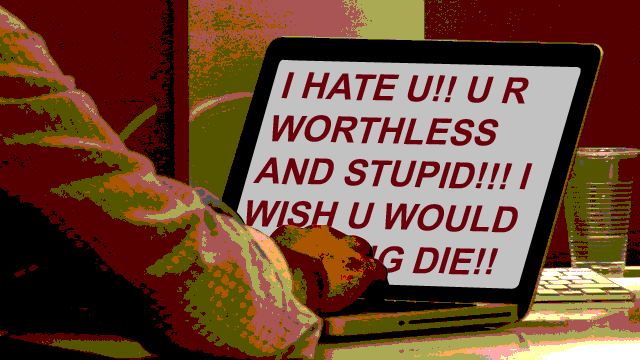

Social media services came under fire recently for not being more proactive in addressing online harassment and violent threats, and for removing offensive comments only after they are reported. Now some believe the role of social media in overseeing online behavior should change.

“Whoever is in charge of these spaces — that includes the people who are hosting the site, that includes the users on the site, that includes the social media platforms — they have a responsibility to actually try to make their environment such that they are not aggressively targeting and attacking and driving away certain groups of people,” said Mary Anne Franks of the University of Miami School of Law.

She recalls that in its early days, social media “transformed our lives and made available things that were not possible a decade ago.”

Now she asks why the creativity and resourcefulness that made that possible are not being dedicated to getting the most from online communication without the damage that it can do.

Elaine Davies of California’s Chapman University agrees that social media companies should have a “more responsible role.” But she says the problem is that “if one person creates a fake account or they clone someone’s account, they [social media services] don’t know until somebody brings it up; and there should be a rapid response.”

Franks argues that social media sites can be creative about dealing with abusers hiding behind anonymity or similar tools that “foster greater freedom of expression” but also have what she calls a “darker side.”

She points out that Twitter, Facebook and many other services already have policies in place to curb spam, for example, and suggests that they can address abusive behavior “without having to fundamentally alter the kinds of beneficial instruments and ways that people can talk to each other online.”

“And people will quibble about whether that is a violation of free speech or whether it is a positive factor in reducing harassment,” added Johns Hopkins University’s Patricia Wallace, Senior Director of the Center for Talented Youth and Information Technology.

Davies, who believes “the Internet should be for free speech,” questions whether it is even possible for social media companies to proactively police abusive behavior.

Both Davies and Wallace accuse social media companies of being more concerned with making a profit, although Wallace concedes that Twitter and Facebook, for example, have taken down abusive posts and comments in the past.

Twitter and Facebook have clear policies against abusive behavior. While declining an interview request, a Twitter spokesman shared the following statement:

Our rules are designed to allow our users to create and share this wide variety of content in an environment that is safe and secure for our users. When content is reported to us that violates our rules, which include a ban on targeted abuse, we suspend those accounts. We evaluate and refine our policies based on input from users, while working with outside organizations to ensure that we have industry best practices in place.

After a recent spike in cyberharassment, Twitter refined its blocking and reporting tools to make it easier for users to report harassment on their mobile phones.

In an interview with TECHtonics, Facebook’s Communications Manager Matt Steinfeld says the company’s community standards state clearly that “harassment, abuse, bullying — things like that — direct attacks from people, are not allowed on Facebook.”

But the abusive behavior needs to be reported to the website administrators.

“We have like 1.3 billion people using Facebook … So it’s really a large neighborhood watch,” he said.

“So if somebody is being bullied or harassed, they are really in the best position to tell us that,” he said, given that “as a third party reviewing things, it’s not always apparent based on the language that that person is using whether they are truly bullying or harassing someone else.”

Because of the difficulty of proactively looking for abusive content, Steinfeld says Facebook makes it “as easy as possible for people to report it to us” and makes sure that, when they do, Facebook has “someone looking at it and responding to it. That’s where we put our priority.”

“If it is abusive, if it is harassment, we remove it,” he stressed.

But Jayne Hitchcock, President of Working to Halt Online Abuse, says the victims her organization helps often say they went to Facebook or Twitter or other websites and their complaints were not taken seriously.

“They’ve had friends or relatives file complaints and nothing is being done,” she said. “And the person stalking them or harassing them continues. And then it does get to the point where they do have to go to the police and then hope that the police will believe them and do something. And then it just goes from there. It’s like one big giant snowball.”

Hitchcock argues that social media companies are not doing a “good job” in addressing online abuse. She says victims whose repeated complaints to Facebook went unaddressed came to her group for help. She questions whether the people tasked with reviewing user complaints are qualified.

“It’s one of these things where you wonder, not only in law enforcement, but the so-called abuse people at these websites — if they are properly trained,” she said. “And I don’t think they are.”

She says responding to complaints isn’t enough if they are not taken seriously or if they are passed off without action. She suggests that social media sites might consider “live chat support, post tips on staying safer [by changing their privacy settings or general settings], and being proactive.”

Change is possible, says Franks, although it has to take place on all fronts: legal, social, in the workspace, and in online forums.

She describes harassment and abuse as “fundamental design flaws” and says tech and social media platforms should make structural changes to “inhibit harassment and guard against abuse.”

“To do this, they — and all of us — must reject the tendency to treat social media and technology as though they are natural forces rather than the product of human choices and value judgments,” she said. “Once we recognize this, we can make choices and value judgments that do not merely guard the interests of the privileged, but serve the interests of equality and democracy.”

2 responses to “Should Social Media Police Online Abuse?”

Some woman somewhere decides that the entire world should play by her rules (I think Hitler made the same type of rules), and then we all should suffer her incorrigible pampering. If I remember correctly, social influences as a young adult kept other kids from picking their noses and eating the treasures found within.

Say what you want, but only cowards are hurt by what this lady says is a problem…

If they don’t have the means or availability, it’s meaningless gibberish. Does anyone really think that people who are serious about causing harm will not accomplish their goals because they are barred from Twitter or Facebook? It’s better to allow those who are not serious to express and vent their frustrations online than to allow their emotions to erupt into real violence. People want a social network but don’t want the ills of an open forum. The only thing the social networks should be required to do is allow blocking, deliver an individual’s comments only to the person[s] intended, and send a message that the person has been blocked permanently. If you don’t see or hear it, you’re not bothered.